Dr. Amy Finkelstein and team have a special report in the New England Journal of Medicine. They conducted a rigorous randomized controlled trial of the Camden Coalition of Healthcare Providers. The Camden Coalition has gotten a ton of press about their “hot spotting” method of identifying folks who use a lot of medical services and then wrapping these folks in a bundle of care coordination and some social services. Prior assessments, using a simple pre/post analysis of the individuals included in the program, had found that this approach saved a lot of money and reduced hospitalizations. Finkelstein and her team conducted a random assignment evaluation to see what happens without the intervention and therefore what the intervention is actually doing. Their initial finding, looking only at hospital readmissions, is that the hot spotting approach is not doing much:

RESULTS

The 180-day readmission rate was 62.3% in the intervention group and 61.7% in the control group. The adjusted between-group difference was not significant (0.82 percentage points; 95% confidence interval, −5.97 to 7.61). In contrast, a comparison of the intervention-group admissions during the 6 months before and after enrollment misleadingly suggested a 38-percentage-point decline in admissions related to the intervention because the comparison did not account for the similar decline in the control group.

WOW

So what is happening?

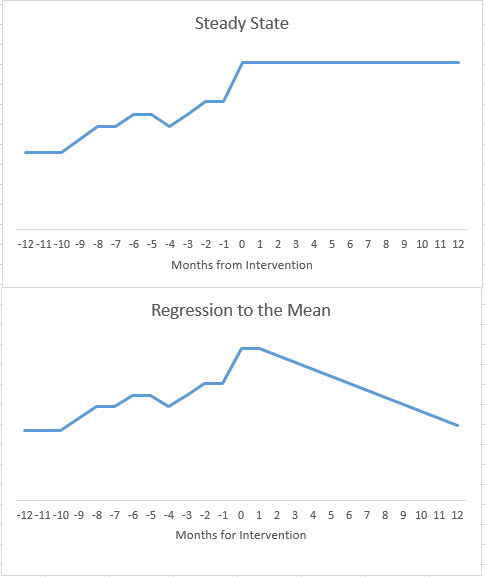

It all depends on the shape of the assumed counterfactual. Comparing people against themselves has an implicit assumption that the crisis that prompted their eligibility is a permanent phase change and the new steady state. Any change from that steady state could then be attributed to the intervention.

Another shape of trajectory could be reasonably assumed. We could hypothesize that a spike is often just a spike and it will naturally recede. A spike will trigger the intervention but at least some if not all of the future decline in utilization/re-admissions/costs could/would have occurred even if no intervention happened.

Figuring out the right counterfactual, and therefore the shape of the response without an intervention, is critical to evaluation. The Finkelstein study shows that people with a huge spike see a natural decline in utilization with or without intervention. Getting the shape of the counterfactual right is what drives this evaluation’s conclusion: on at least this metric of re-admission, hot spotting is not doing so hot.
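To make the “a spike is often just a spike” story concrete, here is a toy simulation. It is only a sketch under made-up assumptions, not the study’s data or method: every person gets a fixed monthly admission probability drawn from a Beta(2, 8) distribution, enrollment is triggered by three or more admission-months in a six-month window, and the intervention has zero true effect. The function names and all the numbers are hypothetical.

```python
import random


def count_admissions(rng, monthly_prob, months=6):
    """Count how many of `months` months include a hospital admission."""
    return sum(rng.random() < monthly_prob for _ in range(months))


def mean(values):
    return sum(values) / len(values)


def simulate(n_people=20000, spike_threshold=3, true_effect=0.0, seed=1):
    """Enroll people after a spike, randomize them, and compare outcomes.

    All parameters are illustrative assumptions, not estimates from the trial.
    """
    rng = random.Random(seed)
    pre = {"treated": [], "control": []}
    post = {"treated": [], "control": []}
    for _ in range(n_people):
        # Stable underlying risk; the spike is luck layered on top of it.
        p = rng.betavariate(2, 8)
        before = count_admissions(rng, p)
        if before < spike_threshold:
            continue  # no spike, so never flagged for the program
        # Coin-flip assignment, as in a randomized trial.
        arm = "treated" if rng.random() < 0.5 else "control"
        p_after = p * (1 - true_effect) if arm == "treated" else p
        pre[arm].append(before)
        post[arm].append(count_admissions(rng, p_after))
    return {
        "pre_treated": mean(pre["treated"]),
        "post_treated": mean(post["treated"]),
        "pre_control": mean(pre["control"]),
        "post_control": mean(post["control"]),
    }


if __name__ == "__main__":
    for name, value in simulate().items():
        print(f"{name}: {value:.2f}")
```

In this toy world the pre/post comparison shows a large drop in admissions for the treated group even though the program does nothing, because the control group drops by about the same amount. Only the treated-versus-control comparison recovers the true effect of zero.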

Good evidence is critical. Most experiments will either return null results or return slightly significant results. That is okay. My priors have changed significantly in the past sixteen hours. Hot spotting has a very plausible and facially coherent logic model of change but the evidence this morning is saying that the plausibility of actual results is far lower now than it was before the evaluation was published. This is a big deal.

WaterGirl

David,

Either “priors” is shorthand in the profession, and I am not familiar with it, or this is an autocorrect gone wrong.

Felanius Kootea

Does it matter whether the patients had chronic versus acute conditions? Did they tease that apart or is it not relevant?

Bill Hicks

I believe Kevin Drum has pointed out something similar regarding research related to whether having health insurance improves your health. Some studies have looked at death rates/life span over a span of five years or so to see if death rates drop when you have health insurance, and they often don’t, or not by much. But as Kevin points out, there is a lot more to health than death rates and when you look at other metrics, health insurance is obviously better for your health. Perhaps the return percentage of these hotspot individuals is not the best metric and overall costs and health of the individuals need to be considered. Or perhaps it was in the body of the paper; I could only access the abstract.

David Anderson

@WaterGirl: It is a reference to the Bayesian statistical concept of “prior belief”

It is a geeky way to say “I’ve changed my mind”

Another Scott

I heard a bit about this on the radio. I haven’t read the study – thanks for this.

In general, I would be very, very careful about taking hospitalizations as a metric, especially if lots of older people were part of the study. Anecdote – When one of J’s parents was in a nursing home for rehab after an illness, we were visiting and the nurse on duty was having some issues and said, in response to some general questions we had, something like, “well, if you don’t like what we’re doing here, I can call an ambulance and have him admitted…” IOW, it was a way to get the patient out of her hair rather than spending more time with them and their family members.

(And that was one of the best nursing homes we dealt with … :-/)

I can’t believe that that’s a rare occurrence.

Studies like these probably work better in making comparisons between systems, not by taking a “random” grouping within a particular system – there’s too much risk that the groups aren’t really the same. IOW, if Swedelandia has better outcomes with lower costs for people aged 65-90 than the USA, maybe look at how they run their medical system for people in that age group. Is it that those people are healthier to begin with? That they have more, but lower paid, medical staff (so that staff isn’t overworked and pressured to make instant diagnoses)? Etc. Of course, there are many more variables to consider in such a study…

Still, more data is better, and examining one’s priors (former beliefs and biases) is always a good thing.

My $0.02.

Cheers,

Scott.

Immanentize

I heard about this last night on the radio…. They had one anecdote which indicated that the people who fell out of the Camden program did so primarily because the hotspotting wasn’t really all that comprehensive. Lost housing seemed to be a primary (as we know) indicator of random expensive care — the anecdata guy they chose was a diabetic with alcohol addiction issues. He lost his house and over the next 3 years went to the emergency room 70 times. Got back in housing, and has been clean for 2 years with diabetes under control.

What I gathered from all of this is hotspotting is health effective. Just not cost-effective.

justawriter

I can see this. I know several folks with moderate to severe conditions that were not diagnosed correctly at first and so had several health crises before they got the proper medication and either recovered or were able to manage their conditions. One, going back 50 years or more, was a friend’s father who was diagnosed with an ulcer and put on a bland bread and milk diet – turned out he had celiac disease and was lactose intolerant.

WaterGirl

@David Anderson: That works, thanks!

Feathers

What I’m seeing is that the random assignment is the issue. People with knowledge and insight choosing who gets “hot spotted” is an entirely different situation from people fitting a certain set of variables being randomly assigned. The study is showing that “hot spotting” can work, but it is not something which can be “deskilled” and relegated to an algorithm. Teresa Nielsen-Hayden’s “Fruit Punch Czar” post comes to mind. Upon rereading, not an exact match, but I think it brings insight to the people side of the issue.

weavrmom

There is a big program in my County health system doing hotspotting on high ER users, and they will do their own evaluation. In my contact with these folks, they have numerous issues, as mentioned by others here, including housing/transportation/mental health/drug use/etc. The idea is to connect them with all needed available services to stabilize their lives and assist them in also getting the help they need in an ongoing, non-emergency way. Not sure how the numbers will crunch, but hospital admissions are only part of the bigger picture.

Wag

Correlation does not imply causation. Just because there is a correlation between aggressive case management and a decrease in hospitalizations does not imply that the aggressive case management caused the hospitalization decrease. The fact that a randomized controlled trial demonstrates this is not surprising.

I think what gets lost in this, however, is that there is value for case management above and beyond decreased hospitalizations. In our university-based practice, we have had excellent case management for the past couple of years. The case managers do an excellent job of following up to make sure that appropriate referrals for home health and physical therapy are happening, and it is my sense that overall, patients are doing better than when we were relying on opaque bureaucratic systems to get patients into the appropriate follow-up care. I think the case management is an excellent thing, but we are trying to measure the wrong outcomes by trying to measure decreased hospitalizations.

weavrmom

@Wag: I so agree with your points. Good life/health care outcomes for improved quality of life are part of systems we should strive for, rather than ones based only on profit. It’s very important to know what works, and manage resources, but case-management is crucial to quality of care, imho.

Ella in New Mexico

Haven’t read the details of the study, but could it be they made the criteria too broad? Also, should the window of monitoring have been longer? One year with these types of complex patients is nowhere near long enough to fix the multiple factors that feed into readmission. Especially if they included younger populations who had chronic homelessness, drug/alcohol abuse or psychiatric co-morbidities. Do you have any idea how long it takes to get into public housing in most communities? Homeless people re-present for admission because not having a regular residence is incompatible with the behavioral and health changes needed to stay out.

Also, the people we see targeted by this kind of research may be more likely to get drastically sicker with complications the longer their condition exists, particularly those who are elderly or who have advanced end organ damage from diabetes. I’m betting the wrap around service folks would fare better over, say, three years than those not receiving the services.

I’ve seen/been a referrer for some programs like these in my world, and I will say there’s broad “triaging” of these patients into the very sickest and highest risks for complications, non-compliance, exacerbations, etc. because of the spectrum of people who present with these illnesses. That’s because as healthcare workers we deeply want to help the typical chronically ill patient avoid readmissions and are all too happy to sign them up for these fantastic wrap around services BECAUSE IT’S WHAT IS GOOD FOR THE PATIENT. It should be a standard of care to evaluate most of them for some or all of these services.

See, this is what good healthcare looks like. Sometimes you do what costs the same or even more in order to help people have the best quality of life they can achieve, even with serious diagnoses, because it’s what is best for patients, their families and for society. As long as we keep trying to justify good healthcare to a system that only plays by the rules of a profit motive we’ll see that very same “capitalistic” system stifle real innovation and improved care.

Barbara

Many health expenses are primarily caused by social factors, and providing more, even better, health care does not address the underlying social causes. Housing and mental illness are particularly intertwined, but homelessness contributes to other chronic diseases because it removes control over many facets of a person’s life, like diet and sleep. Yet, many social programs are designed to make housing into almost a kind of reward for people who successfully manage mental illness or drug addiction without apparent recognition that homelessness is so stressful it can all by itself cause acute psychotic episodes or make the need for relief through drugs of abuse seem overwhelming. The reason to address homelessness is, obviously, that it is the right thing to do, but ALL BY ITSELF it would likely lower many health care and other social costs.

For that reason alone, this study proves something really important, and it is not a failure at all.

jl

Thanks for the informative post and interesting comments. The conclusion is consistent with previous research on longitudinal expenditure patterns, at least for large expenditures for serious conditions. People move out of serious health states at random, given their pre-existing clinical risk. There is persistence in the large expenditures, but they decay back towards the norm. So, I agree with David on this, and thank him for an intuitive explanation of ‘regression towards the mean’.

I think the lesson here is not that preventive care, or creating special protocols and case management programs for high risk people, is ineffective, but that by the time large expenditures occur, it is too late, and people are on a fairly predetermined clinical course, on average.

@Feathers: randomization is necessary to separate causation from correlation. Maybe my explanation above helps.

jl

@Another Scott: You make a good point. It is difficult to measure true health care costs in a health care system as fragmented as the US. But hospital expenditures are 2/3 or more of health care costs, so I think the study’s conclusions are important, and the outcome measure is meaningful.