Crossposted at Inverse Square. (Most of what I post there comes here as well, though not quite all. There’s no paywall, so if y’all would like to be notified when something goes up, that’s where you can do so.)

———————

I teased this story in a post last week: in 2024 a team of researchers from the University of Gothenburg in Sweden put up two papers on a preprint server, describing yet another new malady induced by our screen-dominated age: bixonimania.

In that literature bixonimania was presented as a minor condition, something to be aware of more than worried about. In the papers it was described as the result of overexposure to blue light, the kind that shines off the screens so many of us are glued to these days. It manifested itself when a victim rubbed their eyes too much, leading to the not-very-terrifying symptom in which the patient’s eyelids turned a faint pink.

So: one more morsel bobbing in the sea of major and minor results cast into the ocean of scientific publication. A discovery that would be of no great import to anyone beyond, perhaps, a tiny cohort of specialists.

Except for one thing…

It was all a lie.

The papers were invented; the lead author was a fake, complete with an AI-generated photo, and the Swedish scientists who created the fictional reports weren’t ophthalmologists but researchers interested in the brave new world that AI, specifically large language models (LLMs), is creating for us.

What they found is first funny and then chilling. The whole story is well told by Chris Stokel-Walker, writing in Nature. Within weeks of posting the two fakes, major LLMs started to refer to bixonimania as real. Bing, Gemini, Perplexity, ChatGPT—they all fell for it. As Stokel-Walker documents, in time, the “disease” migrated into the published scientific literature, leading to the embarrassment of a retraction.

The funny part is that the Swedish team, led by Almira Osmanovic Thunström, larded their hoax with brutally clear signals that something was awry. Among the ones Stokel-Walker listed, my favorite came in one of the acknowledgements sections: a shout out to “Professor Maria Bohm at The Starfleet Academy for her kindness and generosity in contributing with her knowledge and her lab onboard the USS Enterprise.” That should give one pause, shouldn’t it? And if that weren’t enough, the researchers included lines like “this entire paper is made up.”

Nuff said.

Except, and this is the proximate point of this post, this is a reminder that LLMs are not in fact thinking machines. They are simulations of reasoning beings, built not on comprehension but on statistical inference. And as such, they are clearly game-able.

So that’s one worry: what we know will become what we might know, as mis- or disinformation pollutes the data which feeds our seemingly hyper-competent AI assistants. The possibilities for actively malign outcomes are obvious. Explicitly bad actors could game the LLM ecosystem by injecting supporting “data” for their particular grifts by spamming the online literature with seemingly plausible scientific results. If we’re talking medicine and health, the harms that could flow from such scams are frightening.

But beyond that concern, real and major as it is, there was a more general issue raised for me by bixonimania’s arrival on the scene: how it will play into the war on expertise built into the “do your own research” strain of “alt-health” folly.

That notion, that experts conceal facts for corrupt reasons and a dedicated amateur researcher can penetrate behind that wall of lies to the “real” story of vaccines (which is what I’m currently most obsessed with), lies at the heart of the powerful and effective messages delivered by anti-vax influencers, led by their grifter in chief, Robert Kennedy Jr., and those around him.

The flaw in the notion that simple sitzfleisch and the ability to run searches on the internet will reliably reveal the truth about vaccination is that it’s damned hard to assess information about particular vaccines and the scientific disciplines that underlie the study of vaccination without a meaningful grasp of immunology, modern molecular biology, microbiology and more. Especially when one already knows the answer (RFK Jr. never met a vaccine he didn’t loathe). It’s way too easy to find correlations (or seeming associations that turn out not to exist at all), then leap to claims of causation that don’t hold up.

That happened a lot before AI came on the scene, of course. But given this demonstration of current LLMs’ deeply flawed bullshit detectors (Starfleet Academy? I mean, really…) it seems likely that this technology will only enhance the vaccine denialists’ ability to reinforce their believers’ confidence in things that ain’t so. Imagine how many “studies” that prove vaccines are bad could be generated to “teach” our LLM friends, who could then deliver that good (bad) news to those on the hunt for reasons to avoid the greatest life-saving technology humankind has ever gifted itself.

I haven’t got any obvious solution—except, perhaps, to hold AI providers responsible for harms born of their products functioning as designed. But the real issue is human: how to persuade our society to accept the division of labor required to live together.

We have to recognize that we can’t all be brain surgeons, or plumbers, or vaccinologists, and that those who are do in fact have distinct expertise that the rest of us can depend on.

And with that…

This thread is as open as current AI is gullible.

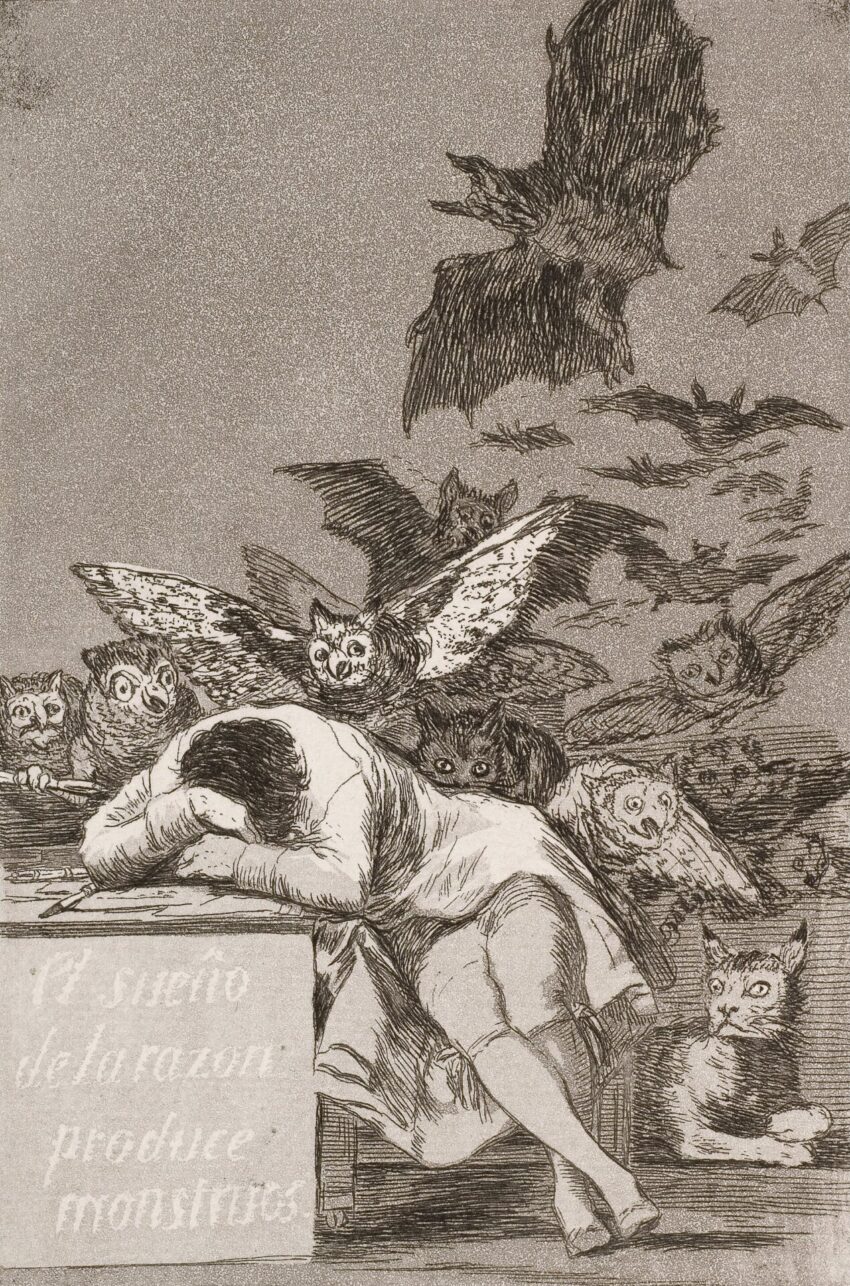

Image: Francisco Goya, The Sleep of Reason Produces Monsters, c. 1799