Just in time for the latest, greatest Shitgibbon pursuit of all those not-good-people who got to vote for his opponent, Maggie Koerth-Baker brings the hammer down. She’s written an excellent long-read over at FiveThirtyEight on what went wrong in the ur-paper that has fed the right-wing fantasy that a gazillion undocumented brown people threw the election to the popular-vote winner, but somehow failed to actually turn the result.

The nub of the problem lies with a common error in data-driven research: a failure to come to grips with the statistical properties (the weaknesses) of the underlying sample or set. As Koerth-Baker emphasizes, this is hardly unusual, and usually not quite as consequential as it was when an undergraduate, working with her professor, found that, apparently, large numbers of non-citizens (14% of them) were registered to vote.

There was nothing wrong with the calculations they used on the raw numbers in their data set, drawn from a large survey of voters called the Cooperative Congressional Election Study. The problem, though, was that they failed to fully handle the implications of the fact that the people they were interested in, non-citizens, were too small a fraction of the total sample to eliminate the impact of what are called measurement errors. Koerth-Baker writes:

Non-citizens who vote represent a tiny subpopulation of both non-citizens in general and of the larger community of American voters. Studying them means zeroing in on a very small percentage of a much larger sample. That massive imbalance in sample size makes it easier for something called measurement error to contaminate the data. Measurement error is simple: It’s what happens when people answer a survey or a poll incorrectly. If you’ve ever checked the wrong box on a form, you know how easy it can be to screw this stuff up. Scientists are certainly aware this happens. And they know that, most of the time, those errors aren’t big enough to have much impact on the outcome of a study. But what constitutes “big enough” will change when you’re focusing on a small segment of a bigger group. Suddenly, a few wrongly placed check marks that would otherwise be no big deal can matter a lot.

This is what critics of the original paper say happened to the claim that non-citizens are voting in election-shaping numbers:

Of the 32,800 people surveyed by CCES in 2008 and the 55,400 surveyed in 2010, 339 people and 489 people, respectively, identified themselves as non-citizens. Of those, Chattha found 38 people in 2008 who either reported voting or who could be verified through other sources as having voted. In 2010, there were just 13 of these people, all self-reported. It was a very small sample within a much, much larger one. If some of those people were misclassified, the results would run into trouble fast. Chattha and Richman tried to account for the measurement error on its own, but, like the rest of their field, they weren’t prepared for the way imbalanced sample ratios could make those errors more powerful. Stephen Ansolabehere and Brian Schaffner, the Harvard and University of Massachusetts Amherst professors who manage the CCES, would later say Chattha and Richman underestimated the importance of measurement error — and that mistake would challenge the validity of the paper.
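To get a feel for how few stray check marks it takes, here is a rough back-of-the-envelope sketch in Python using the 2008 counts quoted above. The error rates and the 70% citizen turnout figure are my own made-up assumptions for illustration, not numbers from the article or the CCES, and the function name is hypothetical. The sketch also assumes that everyone who did not report being a non-citizen really is a citizen.

```python
# Back-of-the-envelope sketch. error_rate and citizen_turnout are
# hypothetical assumptions, not figures from the article or the CCES.

def expected_misclassified_voters(total, reported_noncitizens,
                                  error_rate, citizen_turnout):
    """Expected number of citizen voters who land in the 'non-citizen'
    group purely because they checked the wrong box."""
    # Approximation: treat everyone who did not report being a
    # non-citizen as a citizen.
    citizens = total - reported_noncitizens
    # Citizens who wrongly tick the non-citizen box.
    wrongly_flagged = citizens * error_rate
    # Of those, the share who voted.
    return wrongly_flagged * citizen_turnout


# Survey counts quoted above for 2008.
TOTAL_2008 = 32_800
NONCITIZENS_2008 = 339
NONCITIZEN_VOTERS_2008 = 38

# Sweep a few hypothetical error rates to see how sensitive the result is.
for error_rate in (0.0005, 0.001, 0.002):
    phantom = expected_misclassified_voters(
        TOTAL_2008, NONCITIZENS_2008, error_rate, citizen_turnout=0.7)
    print(f"error rate {error_rate:.2%}: ~{phantom:.0f} phantom "
          f"non-citizen voters vs {NONCITIZEN_VOTERS_2008} observed")
```

Under those assumptions, a one-in-a-thousand error rate among citizens would by itself put about 23 citizen voters in the "non-citizen" column, a big chunk of the 38 found in 2008. The same handful of errors would vanish without a trace in a group of 32,000.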

Koerth-Baker argues that Chattha (the undergraduate) and Richman, the authors of the original paper, are not really to blame for what came next: the appropriation of this result as a partisan weapon in the voter-suppression wars. She writes, likely correctly in my view, that political science and related fields are more prone to problems of methodology, especially in handling the relatively new (to these disciplines) pitfalls of big-data, or even medium-data, research. The piece goes on to look at how and why this kind of not-great research can have such potent political impact, long after professionals within the field have recognized problems and moved on. A sample of that analysis:

This isn’t the only time a single problematic research paper has had this kind of public afterlife, shambling about the internet and political talk shows long after its authors have tried to correct a public misinterpretation and its critics would have preferred it peacefully buried altogether. Even retracted papers — research effectively unpublished because of egregious mistakes, misconduct or major inaccuracies — sometimes continue to spread through the public consciousness, creating believers who use them to influence others and drive political discussion, said Daren Brabham, a professor of journalism at the University of Southern California who studies the interactions between online communities, media and policymaking. “It’s something scientists know,” he said, “but we don’t really talk about.”

These papers — I think of them as “zombie research” — can lead people to believe things that aren’t true, or, at least, that don’t line up with the preponderance of scientific evidence. When that happens — either because someone stumbled across a paper that felt deeply true and created a belief, or because someone went looking for a paper that would back up beliefs they already had — the undead are hard to kill.

There’s lots more at the link. Highly recommended. At the least, it will arm you for battle with Facebook natterers screaming about a non-existent voter fraud “emergency.”

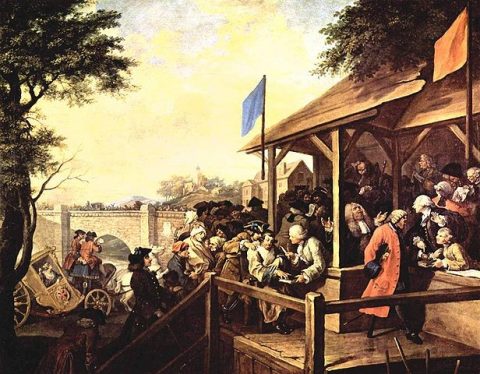

Image: William Hogarth, The Humours of an Election: The Polling, 1754-55
