This is the 2nd installment of this story from BretH about being a motorcycle messenger in the 1980s. In case you missed the first one, just click on the tag and you’ll see it. Even though I was never a motorcycle messenger, it’s so fun to be taken back in time.

I had to learn how to ride. Really ride. I learned how to split lanes properly, and how, if the cars were really close together you could waggle your handlebars at just the right time to clear the mirrors of the cars on either side. This is when I fully understood just how perfect Nick’s motorcycle was, with its slender engine, and his handlebars narrowed by a few inches for more clearance.

One time when he saw the rear of my bike skid a little Nick leaned over and said “take a look at my front tire”. I had not realized it before but the tread on the front tire of his (and every experienced rider’s motorcycle) was severely scalloped from extremely heavy usage of the front brake. This was because it was the absolute fastest way to stop—good riders used it almost exclusively on dry pavement as the weight shift forward compressed the front forks, sending so much force down through the wheel that it was almost impossible to get the front tire to skid. They only used the rear brake for the final few feet of a stop, or when the road conditions were slippery. I practiced stopping by jamming on my front brake just like that until I lost the fear of skidding and eventually my front tire took on the same appearance.

I learned that riding in the rain was not all that much different from riding in the dry if the road conditions were normal—but a rider needed to observe the road ahead like a hawk. Were there depressions from heavy trucks that had collected water? Was it the first rain after a week or so, when the oil that had collected in the pavement was pushed up top? Was the road even slightly discolored? Was it at all shiny? Was there gravel? Nick informed me that Metrobusses were notorious for dumping a little slick fluid when cornering in traffic circles, and to watch out for that. As it was early summer, I did not learn at that time how to ride in the snow (indeed I wasn’t sure how it was really possible) but with winter a natural part of the cycle of seasons, and messengering being a year-round job that time would come, as we will see.

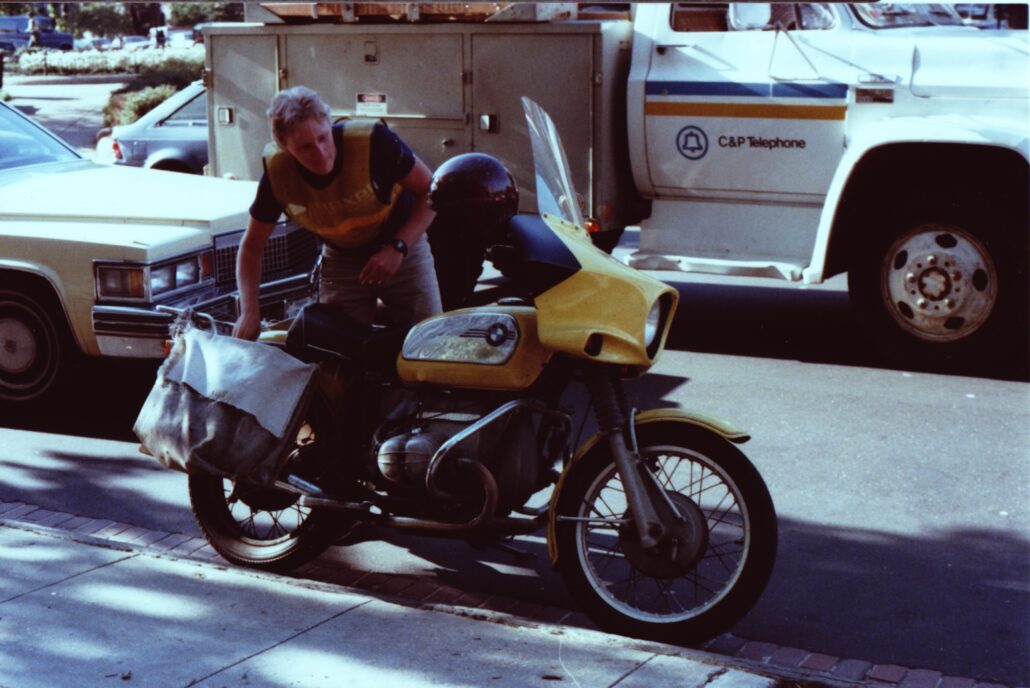

I learned that in order to make the fastest turn a good rider needed to briefly twitch their handlebars in the opposite direction, causing the bike to start leaning for the best grip, then continue the turn normally. I recall one day reaching a traffic circle heading into Georgetown and seeing a rider on an older but bright yellow BMW motorcycle pass me, do the little twitch to get the lean started then absolutely fly around the circle, with the left cylinder barely above the pavement, monitoring the angle of the lean by lightly dragging his left foot on the pavement. I would come to know that rider and those old BMWs very, very well, but that comes later in this story.

There were lessons to learn about other drivers, especially taxis. You noticed the turn of a head as someone glanced behind before making an abrupt turn. You knew that a hand up on the sidewalk meant that the first taxi around would make a beeline for that person no matter what lane they were in. You knew how mad drivers got when you split lanes between them as they were stuck in line at a red light but that soon they were far behind and in no position to do anything about it.

There was one time however when Pete and I, waiting at a light, heard loud honking and cursing coming from the cross street. One of our fellow riders had done something to absolutely enrage a driver who was standing up in his convertible shaking his fist and sputtering in rage. In some real danger of getting run down, or at least into a fight, the rider looked up and saw us, made a quick turn against the red light and nestled between us and from the newfound position of safety mercilessly mocked the motorist. Good times.

I had to learn something I had never considered up to that point: where the heck do you park your motorcycle 50 times a day to go inside a building to pick up or deliver a package, when the street parking was invariably full? The answer was, like so many things on the job, a delicate balance of convenience and legality and respectfulness of others.

The best parking space was next to the building just off an access road crossing the sidewalk leading to underground parking—out of the way of pedestrians and far from the street and the watchful eyes of the police and parking enforcement. The next best, and the one used most often, was to turn into one of those parking access ramps then do a little pirouette to park the bike right next to the street on the little semicircle of pavement there. The spot of last resort was between parked cars as one could never know when an unhappy driver might give your bike a little nudge on the way out of the smaller space you had allowed him.

Using common sense and respect when parking was even more important among the various buildings of the Senate and House of Representatives. Messengers and the Capitol Police had an uneasy truce acknowledging that there were almost no “official” spaces for us to park, but because we were part of the necessary machinery keeping the offices humming, there were unofficial spots that everyone knew were OK, each of which had to be memorized. We were always somewhat on tenterhooks on Capitol Hill as we almost always went well over the speed limit, were somewhat cavalier towards “No Turn On Red” signs, and there were so many police around of various kinds: Park Police, DC Police, Capitol Police, GSA Police and even others.

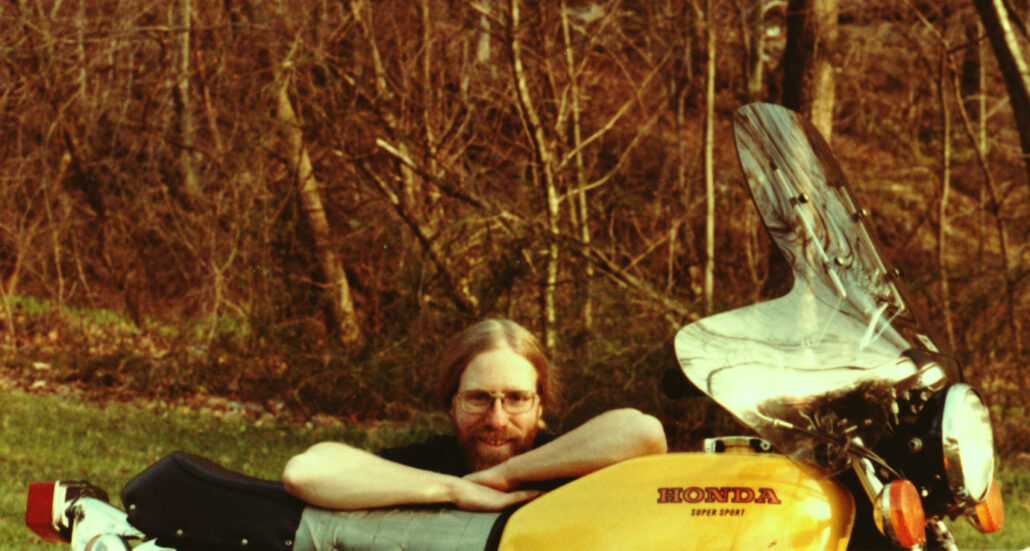

To this day I maintain that motorcycle messengers, at least those that have made it through a year or so, are the very best riders there are. The sheer amount of hours we spent on our bikes in one year—in all kinds of traffic and weather conditions—was far, far more than the recreational rider might see in an entire lifetime. We were good, really good, and we knew we were part of a somewhat elite group. I could not escape the knowledge, however, that as good as we were, we also had to be somewhat lucky. On any given day, a mistake by a driver might end our career—or life, no matter how well we rode. Amazingly enough, although we all knew of incidents and accidents I do not recall any rider being killed while I was on the motorcycle.

Then there was everything we needed to learn about the job itself. Here I will take a minute to talk about Speed Service and what made it somewhat unique among messenger, I mean courier companies, because Big John took pains to call his riders that. He tried hard to give his company a sense of being exclusive, to distinguish it from the multitude of other delivery services in DC at that time.

While most motorcycle and bicycle messengers wore whatever they wanted and indeed some riders looked like someone you would not want to meet in a dark alley, Speed Service riders were issued a gray button-down shirt, with tidy green patches on each shoulder with the Speed Service logo, and were expected to wear black pants to complete the outfit. Motorcyclists wore “shorty” white helmets with a black visor, like the police riders, and our radios were not clipped at our waist but slung over our shoulder with a microphone up near our mouth. At a quick glance we were indistinguishable from the many police services littering the DC area and that was likely part of the point of it: to generate less official scrutiny as we went about our business. Pete actually put this to good use one day when, after being cut off by an out-of-town motorist, he ordered the frightened driver to “pull over and wait here until I get back” before he spun off.

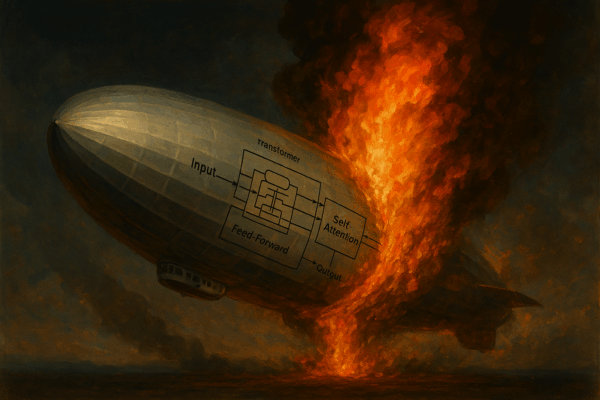

The job was at its core not a difficult one. Customers called the office needing a delivery and we would be dispatched to pick it up from one location and drop it off at its destination. But the simplicity of the task belied the complexity of making that happen efficiently. First off we needed the aforementioned 2-way radios. While it was possible to do the job using telephones (borrowing the phone in an office, and using pay phones, which were ubiquitous in those times), the inability to contact a messenger between calls to adjust the route or to add additional jobs made radios a necessity for any self-respecting company. I well remember my first few days and even weeks listening to the crackling chatter on the radio and thinking I would never get good at deciphering it. However just using the radio and indeed hearing it all day as other riders called in and were called by the dispatcher trained my ears to the point where the transmission could break up and I could be pretty sure of what was being said.

Riders were assigned a number, so the radio would come alive in little bursts all day long: “54”. “54 go”. “54, 10-7”. When I was a little younger I used to make fun of this during the CB radio craze in the ’70s: “Ten-four good buddy”. But there was a purpose behind these codes: conserving precious airtime while unambiguously conveying a message. I learned 10-7 meant “I am at the pickup spot or am ready to hear the instructions about the pickup”. 10-8 meant “I have picked up the package and am ready for more instructions”. 10-4 of course meant “I understand”, 10-10 meant “I have completed everything I had to do for the day”, and 10-19 meant the glorious “You’re done for the day, return to base”. With practice I was able to follow other messengers as they went about their runs, knowing when I might run into them to say hi or to share a little weed, or to just share some time with them while we waited for a new assignment.

Our customers were law offices, lobbying groups, major corporations, trade organizations and the like, and we carried literally anything that needed to get from one place in the city and suburbs to another in a short amount of time. Letters, large envelopes, artwork, court filings, airline tickets, law library books, press releases, gifts for Congresspeople and so much more all found their way into our saddlebags at one time or another.

A special set of Speed Service customers were the photography offices of The Washington Post, the Associated Press, and United Press International. One of our more interesting tasks was to go where news was happening and take film canisters directly from the press photographers to their offices to be processed and hopefully put on the front page of the newspapers. One time I was waiting for a photographer at the site of a shooting and standoff and was thrilled when he had to go use the restroom and told me to keep watch with his camera, mounted on a tripod with a huge telephoto lens, and take any photos I could if something happened while he was away.