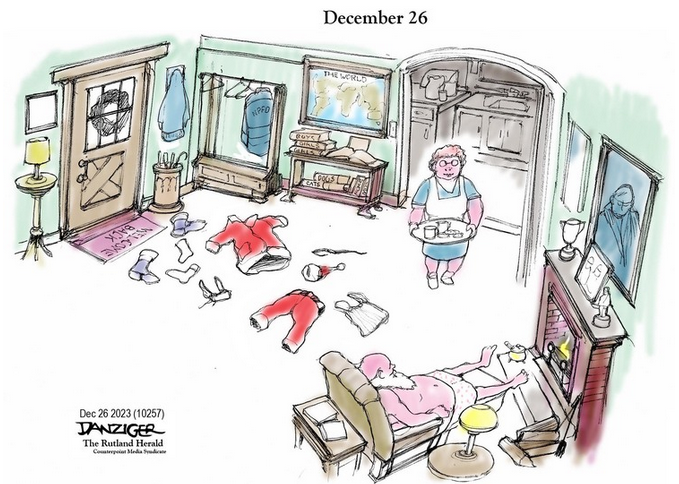

“Ready or not, here I come.” That line comes from a children’s game.

Now, it’s the reality of AI. So ready or not, here it comes. With AI, we have a rocky road ahead.

If I were given the choice, I would probably stop the AI train because I think it can do more harm than good. Feel free to tell me that I’m wrong.

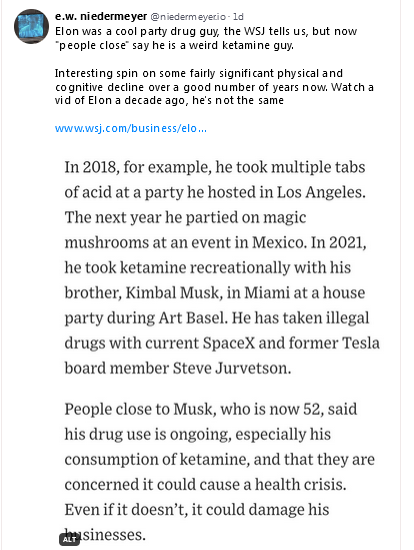

NEWS: Robocall (AI deepfake) of Joe Biden tells NH Democrats *not* to vote on Tuesday.

We’ve been working with US officials on the expected surge in deepfakes.

This is just the beginning. https://t.co/LHg71ctqUE

— Miles Taylor (@MilesTaylorUSA) January 22, 2024

The NYT gift link below takes you to a quiz of 10 images, each of which you judge to be either real or AI-generated. I wonder how well people would do with voices.

The most interesting thing in the article, to me, is that higher confidence in an answer correlated with a higher chance of being wrong. Yikes!

Anyway, take the quiz, or just read the article at the link.

(New York Times gift link) h/t dnfree

Distinguishing between a real versus an A.I.-generated face has proved especially confounding.

Research published across multiple studies found that faces of white people created by A.I. systems were perceived as more realistic than genuine photographs of white people, a phenomenon called hyper-realism.

Researchers believe A.I. tools excel at producing hyper-realistic faces because they were trained on tens of thousands of images of real people. Those training datasets contained images of mostly white people, resulting in hyper-realistic white faces. (The over-reliance on images of white people to train A.I. is a known problem in the tech industry.)

The confusion among participants was less apparent among nonwhite faces, researchers found.

Participants were also asked to indicate how sure they were in their selections, and researchers found that higher confidence correlated with a higher chance of being wrong.

“We were very surprised to see the level of over-confidence that was coming through,” said Dr. Amy Dawel, an associate professor at Australian National University, who was an author on two of the studies.

“It points to the thinking styles that make us more vulnerable on the internet and more vulnerable to misinformation,” she added.

A.I. systems had been capable of producing photorealistic faces for years, though there were typically telltale signs that the images were not real. A.I. systems struggled to create ears that looked like mirror images of each other, for example, or eyes that looked in the same direction.

But as the systems have advanced, the tools have become better at creating faces.

The hyper-realistic faces used in the studies tended to be less distinctive, researchers said, and hewed so closely to average proportions that they failed to arouse suspicion among the participants. And when participants looked at real pictures of people, they seemed to fixate on features that drifted from average proportions — such as a misshapen ear or larger-than-average nose — considering them a sign of A.I. involvement.

What do you believe when you can’t believe your own eyes?

Open thread.